Generative Models in Biological and Cosmic Systems: Unveiling the Patterns of Life and the Universe

The exploration of complex systems, whether they be the intricate machinery of a living cell or the vast expanse of the cosmos, has long been a cornerstone of scientific inquiry. Understanding how these systems evolve, adapt, and maintain their structure demands sophisticated tools and novel analytical frameworks. In recent years, generative models have emerged as a powerful class of computational tools capable of learning underlying patterns and distributions within data, and crucially, generating new, plausible data that adheres to these learned characteristics. This capability has proven invaluable in dissecting the intricate architectures of biological organisms and the fundamental laws governing the universe. By modeling the processes that give rise to these systems, scientists can gain deeper insights into their origins, predict their future states, and even design novel entities with desired properties. This article will delve into the applications of generative models across these seemingly disparate yet fundamentally interconnected realms, highlighting their capacity to unveil the inherent patterns of life and the universe.

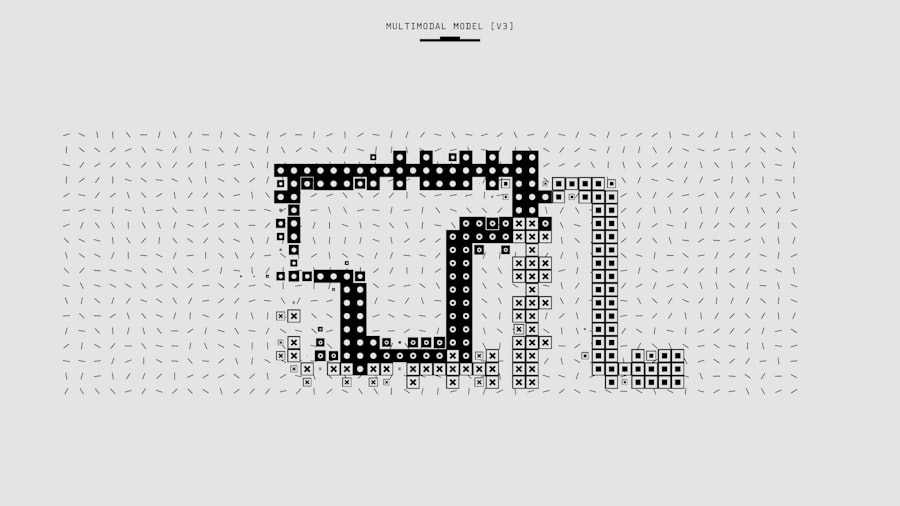

At its core, a generative model aims to learn the probability distribution of a given dataset. Unlike discriminative models, which focus on predicting a label based on input features (e.g., classifying an image as a cat or a dog), generative models attempt to understand how the data itself is generated. This involves learning the underlying factors and relationships that give rise to observed phenomena.

Types of Generative Models

Various architectural designs and probabilistic approaches fall under the umbrella of generative models, each with its strengths and weaknesses suited to different data types and tasks.

Variational Autoencoders (VAEs)

Variational Autoencoders are a type of generative model that uses an autoencoder architecture with a probabilistic twist. An autoencoder consists of an encoder that compresses input data into a lower-dimensional latent representation and a decoder that reconstructs the data from this latent representation. In VAEs, the encoder outputs parameters (mean and variance) of a probability distribution for the latent variables, rather than a single point. This probabilistic encoding allows for sampling from the latent space, enabling the generation of novel data by sampling from the learned distribution and passing it through the decoder. The objective function of a VAE balances reconstruction accuracy with the regularization of the latent space, ensuring it is well-structured and continuous, which facilitates smooth transitions and interpolations between generated samples.
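
The sampling step and the two-part objective described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than a full VAE: the encoder and decoder networks are omitted, the function names are hypothetical, and the squared-error reconstruction term assumes a Gaussian decoder with fixed variance. The weight `beta` is likewise an assumption, included to make the balance between the two terms explicit.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Draw z ~ N(mu, sigma^2) as mu + sigma * eps, with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """Closed-form KL(N(mu, sigma^2) || N(0, I)), summed over latent dimensions."""
    return 0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var, axis=-1)

def vae_loss(x, x_recon, mu, log_var, beta=1.0):
    """Reconstruction error plus beta-weighted latent regularization (batch mean)."""
    recon = np.sum((x - x_recon) ** 2, axis=-1)
    return np.mean(recon + beta * kl_to_standard_normal(mu, log_var))

# Usage with made-up encoder outputs for a batch of 4 inputs and 2 latent dimensions.
rng = np.random.default_rng(0)
mu = np.zeros((4, 2))
log_var = np.zeros((4, 2))
z = reparameterize(mu, log_var, rng)  # latent samples that would be fed to a decoder
```

The KL term is what keeps the latent space well-structured: it penalizes encodings that drift away from the standard normal prior, so that points sampled from the prior decode to plausible data.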

Generative Adversarial Networks (GANs)

Generative Adversarial Networks consist of two neural networks: a generator and a discriminator, trained in a zero-sum game. The generator’s task is to create synthetic data that resembles the real data, while the discriminator’s task is to distinguish between real and generated data. During training, the generator attempts to fool the discriminator by producing increasingly realistic outputs, and the discriminator improves its ability to detect fakes. This adversarial process drives the generator to produce highly convincing samples that capture the underlying distribution of the training data. GANs have shown remarkable success in generating diverse and high-fidelity data, particularly in image synthesis.
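
The opposing objectives can be written down directly in terms of the discriminator's outputs. The sketch below assumes the discriminator returns a probability in (0, 1) that its input is real; the function names are hypothetical, and the networks themselves are left out. It shows both the zero-sum generator loss and the non-saturating variant that is commonly used in practice because it gives stronger gradients early in training.

```python
import numpy as np

def discriminator_loss(d_real, d_fake, eps=1e-12):
    """Binary cross-entropy: push D(real) toward 1 and D(fake) toward 0."""
    return -np.mean(np.log(d_real + eps)) - np.mean(np.log(1.0 - d_fake + eps))

def generator_loss_minimax(d_fake, eps=1e-12):
    """Zero-sum form: the generator minimizes log(1 - D(G(z)))."""
    return np.mean(np.log(1.0 - d_fake + eps))

def generator_loss_non_saturating(d_fake, eps=1e-12):
    """Practical variant: the generator maximizes log D(G(z))."""
    return -np.mean(np.log(d_fake + eps))

# Usage with made-up discriminator outputs on a small batch.
d_real = np.array([0.9, 0.8, 0.95])  # discriminator is fairly sure these are real
d_fake = np.array([0.1, 0.3, 0.2])   # and fairly sure these are generated
d_loss = discriminator_loss(d_real, d_fake)
g_loss = generator_loss_non_saturating(d_fake)
```

Both generator losses fall as the discriminator's output on generated samples rises, which is the sense in which the generator is rewarded for fooling the discriminator.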

Flow-based Generative Models

Flow-based models learn an invertible mapping between a simple, tractable distribution (like a Gaussian) and the complex data distribution. Because every step of the mapping is invertible, the likelihood of a data point can be computed exactly through the change-of-variables formula, and new samples can be drawn efficiently by pushing draws from the simple distribution through the mapping. In practice the mapping is built by composing a sequence of invertible transformations, each with a tractable Jacobian determinant. Exact likelihoods make these models well suited to tasks requiring probabilistic inference.
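
A toy one-dimensional flow makes the exact-likelihood property concrete. The sketch below composes affine layers, which are about the simplest invertible transformations available. Real flow models use far more expressive layers, and the function names here are hypothetical. The log-likelihood follows the change-of-variables rule: invert each layer in turn, accumulate the log of the absolute Jacobian of the inverse, and add the base log-density.

```python
import numpy as np

def standard_normal_logpdf(z):
    """Log-density of the base distribution N(0, 1)."""
    return -0.5 * (z**2 + np.log(2.0 * np.pi))

def flow_sample(n, layers, rng):
    """Sample by pushing base draws through each (scale, shift) layer in order."""
    z = rng.standard_normal(n)
    for scale, shift in layers:
        z = scale * z + shift
    return z

def flow_log_likelihood(x, layers):
    """Exact log p(x): invert the layers in reverse order and track log|det J|."""
    z = np.asarray(x, dtype=float)
    log_det = 0.0
    for scale, shift in reversed(layers):
        z = (z - shift) / scale        # inverse of the affine layer
        log_det -= np.log(abs(scale))  # log |d z / d x| for this layer
    return standard_normal_logpdf(z) + log_det

# Usage: two stacked layers; scales must be nonzero for invertibility.
layers = [(2.0, 1.0), (0.5, -3.0)]
samples = flow_sample(1000, layers, np.random.default_rng(0))
log_p = flow_log_likelihood(samples, layers)
```

The same two functions cover both directions of the mapping: sampling runs the layers forward, and likelihood evaluation runs them backward.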

Diffusion Models

Diffusion models are a more recent class of generative models that have achieved state-of-the-art results in image generation. They operate by gradually adding noise to data until it becomes pure noise, and then learning to reverse this process by iteratively removing noise. This denoising process, when applied to random noise, can generate new data samples. The step-by-step nature of diffusion allows for fine-grained control over the generation process and has led to highly realistic and diverse outputs.
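
The noising half of this process has a simple closed form in the widely used DDPM-style formulation, which is sketched below. The linear noise schedule and its endpoint values are assumptions chosen for illustration, not something specified in this article, and the function names are hypothetical. The learned half, a network trained to predict the added noise so that it can be removed step by step, is omitted.

```python
import numpy as np

def make_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linear per-step noise variances and the cumulative signal retention."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alpha_bar = np.cumprod(1.0 - betas)
    return betas, alpha_bar

def noise_sample(x0, t, alpha_bar, rng):
    """Jump straight to step t: x_t = sqrt(a_t) * x0 + sqrt(1 - a_t) * eps."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, eps  # eps is the regression target for a denoising network

# Usage: noise a toy "image" halfway and all the way through the schedule.
rng = np.random.default_rng(0)
betas, alpha_bar = make_schedule()
x0 = np.full((8, 8), 5.0)
x_mid, _ = noise_sample(x0, 500, alpha_bar, rng)
x_end, _ = noise_sample(x0, 999, alpha_bar, rng)
```

By the final step almost none of the original signal remains, which is why generation can start from pure Gaussian noise.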

The Concept of Latent Space

A crucial aspect of many generative models is the concept of a latent space. This is a lower-dimensional, abstract representation of the data where underlying features and relationships are encoded. By manipulating points within this latent space, researchers can explore variations and generate novel data instances that exhibit specific characteristics present in the training set. The structure and interpretability of the latent space are key areas of ongoing research.
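
One common way to explore a latent space is to interpolate between two latent points and decode each intermediate point. The sketch below illustrates the idea with a hypothetical `toy_decoder` that stands in for a trained decoder network; its fixed weights are arbitrary and carry no meaning.

```python
import numpy as np

def interpolate_latents(z_start, z_end, num_steps):
    """Evenly spaced points on the straight line from z_start to z_end."""
    weights = np.linspace(0.0, 1.0, num_steps)[:, None]
    return (1.0 - weights) * z_start + weights * z_end

def toy_decoder(z):
    """Hypothetical stand-in for a trained decoder: maps 2-D latents to 3-D data."""
    weights = np.array([[1.0, -1.0, 0.5],
                        [0.5, 2.0, -1.0]])
    return np.tanh(z @ weights)

# Usage: decode five points along the path between two latent codes.
z_a = np.array([-1.0, 0.5])
z_b = np.array([2.0, -0.5])
path = interpolate_latents(z_a, z_b, num_steps=5)
decoded = toy_decoder(path)
```

With a trained model, the decoded sequence would show a gradual transition between the two data instances whose latent codes sit at the ends of the path.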

Generative models have shown great promise in both biological and cosmic systems, enabling researchers to simulate complex processes and predict outcomes with remarkable accuracy.

Generative Models in Biological Systems: Simulating Life’s Complexity

The intricate processes governing life, from the molecular interactions within a cell to the evolutionary dynamics of populations, present formidable challenges for traditional modeling approaches. Generative models offer a promising avenue to unravel these complexities by learning the probabilistic rules that dictate biological phenomena.

Modeling Molecular and Cellular Processes

The vastness of the molecular “parts list” of a cell and the combinatorial possibilities of their interactions make direct simulation computationally intractable. Generative models can learn from experimental data, such as protein interaction networks or gene expression profiles, to infer plausible molecular architectures and dynamic behaviors.

Protein Design and Engineering

Proteins are the workhorses of biological systems, performing a diverse array of functions. Generative models, particularly GANs and VAEs, are being employed to design novel protein sequences with desired structural or functional properties. By training on databases of known protein structures and sequences, these models can learn statistical regularities that relate sequence to folding and function, and subsequently generate sequences predicted to fold into specific shapes or exhibit targeted enzymatic activity. This has implications for drug discovery, enzyme engineering, and the development of novel biomaterials.

Gene Regulatory Network Inference

Gene regulatory networks (GRNs) govern the complex interplay of genes and their products, dictating cellular identity and responses. Inferring the structure and dynamics of these networks from gene expression data is a challenging inverse problem. Generative models can be used to learn the probabilistic relationships between gene expression levels over time, effectively modeling the underlying regulatory logic. This allows for the prediction of gene function, identification of key regulatory nodes, and the simulation of cellular responses to perturbations.

Understanding Cellular States and Differentiation

Cells exist in a multitude of states, defined by their gene expression profiles and functional activities. Generative models can learn to represent the continuous spectrum of cellular states, including rare or transient states that are difficult to capture experimentally. This is particularly relevant for studying cell differentiation, where cells transition from less specialized to more specialized states. VAEs, for instance, can map single-cell RNA sequencing data into a low-dimensional latent space where trajectories of differentiation can be visualized and analyzed.

Microbiome Composition and Function

The human microbiome, a complex ecosystem of microorganisms, plays a critical role in health and disease. Generative models can be applied to analyze the vast and diverse data generated from microbiome studies, such as 16S rRNA sequencing or shotgun metagenomics. They can learn to predict plausible community structures, identify associations between microbial species and host phenotypes, and even simulate the impact of interventions, like probiotic administration, on the microbiome’s composition and functional output.

Evolutionary Dynamics and Population Genetics

Evolutionary processes, driven by mutation, selection, and drift, shape the diversity of life over time. Generative models can be used to simulate these processes and explore hypotheses about evolutionary history.

Simulating Evolutionary Trajectories

Trained on genomic data from different species or populations, generative models can estimate the underlying mutation rates, fitness landscapes, and demographic histories. This allows for the simulation of evolutionary trajectories, enabling researchers to test hypotheses about the drivers of adaptation, the emergence of new traits, or the patterns of genetic variation observed in extant populations.
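
To make the idea of a simulated evolutionary trajectory concrete, the sketch below uses the classic Wright-Fisher model from population genetics rather than a learned deep model. It tracks the frequency of one allele in a haploid population under selection, symmetric mutation, and drift. The function name and all parameter values are illustrative assumptions; in a data-driven setting, parameters like these are what would be inferred from genomic data.

```python
import numpy as np

def wright_fisher(pop_size, num_generations, init_freq,
                  selection=0.0, mutation=0.0, rng=None):
    """Simulate one allele-frequency trajectory in a haploid population."""
    rng = np.random.default_rng() if rng is None else rng
    freqs = np.empty(num_generations + 1)
    freqs[0] = p = init_freq
    for g in range(1, num_generations + 1):
        # Selection: the focal allele has relative fitness 1 + selection.
        p_sel = p * (1.0 + selection) / (1.0 + selection * p)
        # Symmetric mutation between the two alleles.
        p_mut = p_sel * (1.0 - mutation) + (1.0 - p_sel) * mutation
        # Drift: the next generation is a binomial sample of pop_size individuals.
        p = rng.binomial(pop_size, p_mut) / pop_size
        freqs[g] = p
    return freqs

# Usage: compare a neutral trajectory with one under positive selection.
rng = np.random.default_rng(0)
neutral = wright_fisher(500, 200, init_freq=0.5, rng=rng)
selected = wright_fisher(500, 200, init_freq=0.5, selection=0.05, rng=rng)
```

Running many such trajectories under different parameter settings and comparing them with observed variation is one simple way to test hypotheses about the drivers of adaptation.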

Population Structure and Ancestry Inference

Generative models can infer population structure and historical demography from genomic data. They can learn the patterns of genetic admixture and divergence that reflect past migrations and population bottlenecks, providing insights into human history and the evolution of other species.

Generative Models in Cosmic Systems: Decoding the Universe’s Blueprint

The universe, in its immense scale and complexity, presents a different set of challenges and opportunities for generative modeling. From the formation of galaxies to the distribution of dark matter, generative models can help us understand the fundamental physics and initial conditions that shaped the cosmos.

Cosmological Simulations and Data Generation

Cosmological simulations are computationally intensive endeavors that aim to model the evolution of the universe from the Big Bang to the present day. Generative models can augment these simulations or learn from observational data to make predictions.

Generating Realistic Galaxy Distributions

Observational surveys, such as the Sloan Digital Sky Survey (SDSS) or the Legacy Survey of Space and Time (LSST) at the Vera C. Rubin Observatory, collect vast amounts of data on the positions and properties of galaxies. Generative models can learn the statistical properties of these observed galaxy distributions, including their clustering and morphology. This allows for the generation of synthetic mock catalogs that can be used to test analysis pipelines, validate estimates of cosmological parameters, and explore the uncertainties in our understanding of large-scale structure.

Modeling Dark Matter and Dark Energy

The nature of dark matter and dark energy remains one of the most profound mysteries in cosmology. While their gravitational effects are observable, their fundamental composition is unknown. Generative models, trained on cosmological simulations that incorporate different theoretical models of dark matter and dark energy, can learn the observable consequences of these components. This can aid in the design of future experiments and the interpretation of existing observational data, helping to constrain theoretical models and potentially reveal the underlying physics.

Understanding Cosmic Structure Formation

The hierarchical formation of cosmic structures, from small dark matter halos to massive galaxy clusters, is a complex process driven by gravity. Generative models, trained on output from sophisticated N-body simulations, can learn the statistical relationships between initial conditions and the resulting large-scale structure. This allows for faster exploration of parameter space and the generation of mock universes that mimic the complexity of the real one.

Analyzing Astronomical Observations

Astronomical observations are often noisy and incomplete. Generative models can help to “fill in the gaps” and extract meaningful information from this data.

Image Inpainting and Super-Resolution for Astronomical Images

Telescopes often capture images with missing data due to instrumental limitations or cosmic ray impacts. Generative models can be used for image inpainting, filling in these missing regions in a plausible manner consistent with the surrounding data. Furthermore, super-resolution techniques powered by generative models can enhance the detail in low-resolution astronomical images, revealing finer structures that would otherwise be obscured.

Anomaly Detection in Astronomical Data

The universe is a vast and potentially surprising place. Generative models can be trained on large datasets of “normal” astronomical objects or phenomena. Observations to which the trained model assigns low probability deviate from this learned normality and can be flagged as potential anomalies, guiding astronomers to investigate rare or unexpected cosmic events.
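
The likelihood-thresholding idea can be sketched with the simplest possible density model, a multivariate Gaussian fitted to feature vectors of "normal" objects. A deep generative model with a tractable likelihood would take its place in practice. The two-dimensional synthetic features, the function names, and the first-percentile threshold are all assumptions made for illustration.

```python
import numpy as np

def fit_gaussian(data):
    """Estimate the mean vector and covariance matrix of the training data."""
    return data.mean(axis=0), np.cov(data, rowvar=False)

def log_likelihood(points, mean, cov):
    """Log-density of each row of `points` under N(mean, cov)."""
    diff = points - mean
    mahalanobis = np.sum(diff * np.linalg.solve(cov, diff.T).T, axis=1)
    _, log_det = np.linalg.slogdet(cov)
    dim = mean.shape[0]
    return -0.5 * (mahalanobis + log_det + dim * np.log(2.0 * np.pi))

def flag_anomalies(points, mean, cov, threshold):
    """True where the model assigns a log-likelihood below the threshold."""
    return log_likelihood(points, mean, cov) < threshold

# Usage: train on synthetic "normal" objects, then score two new observations.
rng = np.random.default_rng(0)
normal_objects = rng.standard_normal((5000, 2))
mean, cov = fit_gaussian(normal_objects)
threshold = np.percentile(log_likelihood(normal_objects, mean, cov), 1.0)
candidates = np.array([[0.0, 0.0], [8.0, 8.0]])
flags = flag_anomalies(candidates, mean, cov, threshold)
```

The threshold sets the trade-off between missed anomalies and false alarms: a first-percentile cut flags roughly one percent of ordinary objects by construction.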

Predicting Stellar Evolution and Supernovae

The life cycles of stars are governed by complex physical processes. Generative models can be trained on observational data and theoretical models of stellar evolution to predict the future states of stars, including the eventual demise of some stars as supernovae. This can aid in the prediction of transient astronomical events and the understanding of nucleosynthesis, the cosmic origin of elements.

Bridging the Gap: Common Principles and Interdisciplinary Insights

Despite the apparent differences in scale and complexity, biological and cosmic systems share fundamental organizational principles that generative models can help to illuminate. Both are emergent phenomena, shaped by underlying physical laws and stochastic processes, and both exhibit intricate patterns of organization and adaptation.

Universal Patterns of Self-Organization

Both biological and cosmic systems demonstrate self-organization, where local interactions lead to the emergence of complex global patterns without explicit external direction. Generative models can learn the rules governing these local interactions and simulate how they give rise to ordered structures, whether it be the intricate folding of a protein or the formation of filamentary structures in the cosmic web.

The Role of Stochasticity and Noise

Stochasticity, or randomness, plays a crucial role in both biological evolution and cosmic evolution. In biology, random mutations drive innovation, while in cosmology, quantum fluctuations in the early universe are believed to have seeded the large-scale structure. Generative models that explicitly incorporate or learn from noise can better capture the probabilistic nature of these processes.

Information Processing and Transmission

Both biological organisms and the universe itself can be viewed as complex information processing systems. Genes transmit genetic information, and cosmic structures encode information about the universe’s history and composition. Generative models can help us understand how information is encoded, transformed, and transmitted within these systems.

Benchmarking and Validation Across Domains

The successful application of generative models in one domain can often inspire new approaches in another. The techniques developed for generating photorealistic images might find analogous applications in visualizing complex molecular interactions, and conversely, the principles of evolutionary dynamics in biology could offer insights into the long-term behavior of cosmic structures.

Recent advancements in generative models have opened new avenues for understanding complex systems in both biology and cosmology. These models, which can simulate and predict patterns in data, are proving invaluable in fields ranging from genetics to astrophysics. The intersection of these disciplines highlights the potential of generative models to transform our comprehension of life and the cosmos.

Challenges and Future Directions

| System | Generative Model | Metrics |
|---|---|---|
| Biological | Genetic algorithms | Diversity, fitness, mutation rate |
| Biological | Neural networks | Accuracy, loss, learning rate |
| Cosmic | Galaxy formation models | Mass distribution, star formation rate |
| Cosmic | Cosmological simulations | Dark matter distribution, cosmic web structure |

While the applications of generative models in biology and cosmology are rapidly advancing, several challenges remain.

Interpretability and Explainability

Many powerful generative models, particularly deep learning-based ones, operate as “black boxes.” Understanding why a model generates a particular output or what specific features it has learned can be difficult. Enhancing the interpretability of these models is crucial for building trust and gaining deeper scientific understanding.

Data Requirements and Bias

Generative models often require large, high-quality datasets for effective training. Biases present in the training data can be amplified by the model, leading to skewed or inaccurate generations. Developing methods to mitigate data bias and leverage smaller or more heterogeneous datasets is an ongoing area of research.

Computational Resources

Training and deploying complex generative models can be computationally demanding, requiring significant hardware resources and energy. Developing more efficient algorithms and architectures is essential for broader accessibility and application.

Domain-Specific Model Architectures

While general-purpose generative architectures exist, tailoring models to the specific characteristics of biological or cosmic data, such as incorporating physical constraints or known symmetries, can lead to more accurate and robust results.

Ethical Considerations and Responsible Deployment

As generative models become more capable of creating realistic synthetic data, ethical considerations regarding potential misuse, such as the generation of misleading scientific results or deepfakes, become increasingly important. Responsible development and deployment practices are paramount.

In conclusion, generative models have emerged as transformative tools in the scientific endeavor, offering unprecedented capabilities for understanding and interacting with complex systems. From unraveling the intricate molecular choreography of life to decoding the grand cosmic tapestry, these models are pushing the boundaries of scientific knowledge. By learning the underlying patterns and distributions that govern these domains, scientists can generate new hypotheses, design novel entities, and forge deeper insights into the fundamental nature of reality, both within ourselves and across the vast expanse of the universe. The ongoing development and application of generative models promise to unlock further secrets, revealing the beautiful and intricate blueprints upon which life and the cosmos are built.

FAQs

What are generative models?

Generative models are a class of machine learning models that learn the probability distribution underlying a dataset and use it to generate new samples. By capturing the patterns and structure of the training data, they can produce new, realistic examples that were not in the original dataset.

How are generative models used in biological systems?

In biological systems, generative models are used to simulate and predict the behavior of biological processes, such as protein folding, gene expression, and neural activity. These models help researchers understand the complex dynamics of biological systems and make predictions about their behavior.

How are generative models used in cosmic systems?

In cosmic systems, generative models are used to simulate and predict the behavior of celestial objects, such as galaxies, stars, and black holes. These models help astronomers and astrophysicists understand the formation and evolution of cosmic structures and make predictions about their properties.

What are some examples of generative models in biological systems?

Examples of generative models in biological systems include variational autoencoders (VAEs), generative adversarial networks (GANs), and recurrent neural networks (RNNs). These models are used to generate new sequences of DNA, simulate protein structures, and predict gene expression patterns.

What are some examples of generative models in cosmic systems?

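Examples of generative models in cosmic systems include models trained on survey data such as SDSS to generate mock galaxy catalogs, models trained on N-body simulation output to reproduce the statistics of large-scale structure, and models used for image inpainting, super-resolution, and anomaly detection in astronomical observations. These build on the same architectures used elsewhere, such as VAEs, GANs, flow-based models, and diffusion models, and complement physics-based tools like cosmological simulations and stellar evolution models.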